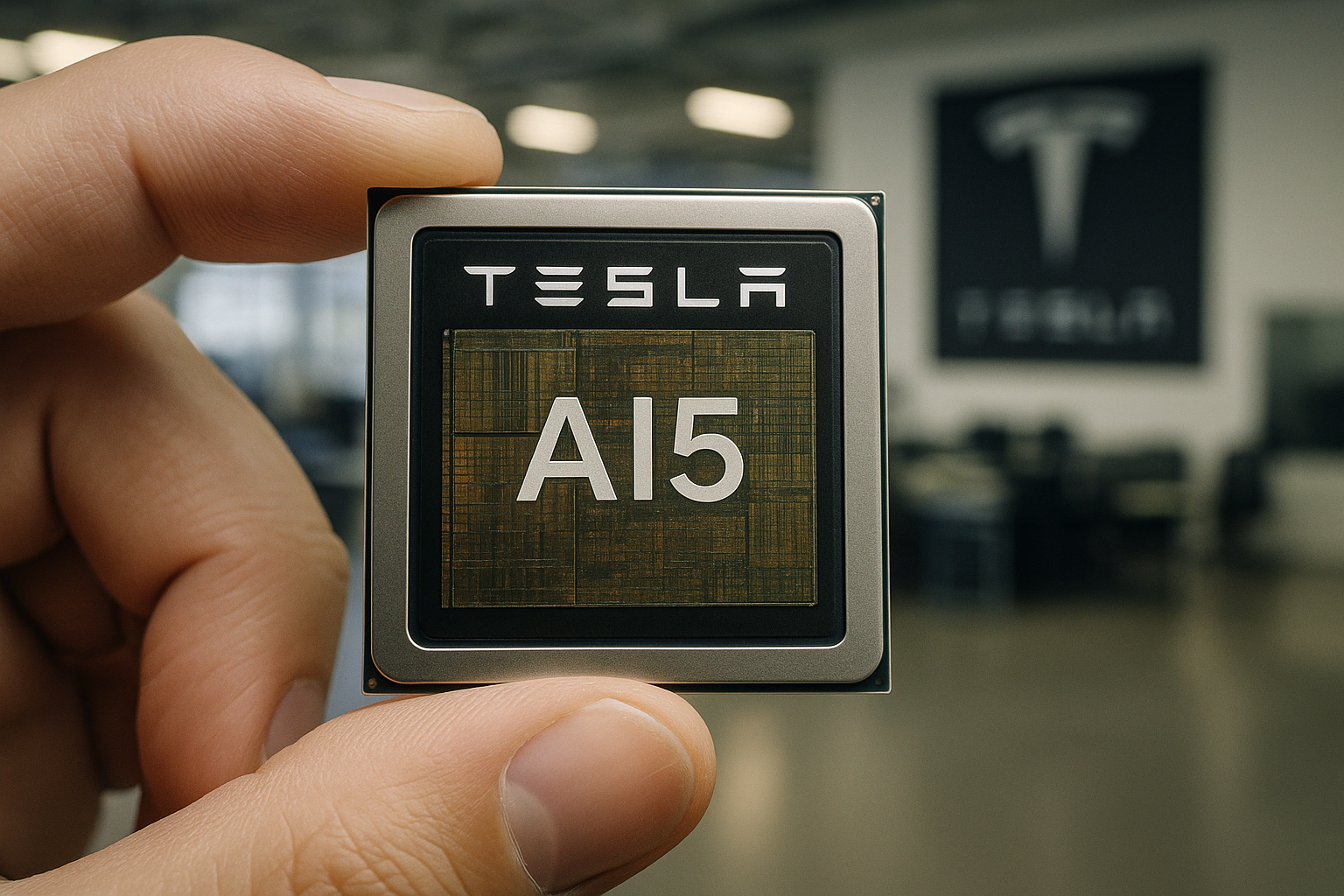

Tesla CEO Elon Musk announced on April 15, 2026, via X that the company has taped out its AI5 chip—the critical next-generation inference processor for its Full Self‑Driving (FSD) system, Optimus robots, and xAI data centers. Musk touted a performance improvement of up to 40× over the current AI4 chip and confirmed that AI6 and Dojo3 are already in development. (techradar.com)

Tom’s Hardware reports that the AI5 module is approximately half the reticle size of AI4 and is surrounded by 12 SK hynix memory packages, likely GDDR6 or GDDR7. Because each GDDR6 or GDDR7 device exposes a 32‑bit interface, 12 packages suggest a 384‑bit memory bus and, depending on the per‑pin data rate, bandwidth between 768 GB/s and 1.536 TB/s. (tomshardware.com)
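The bandwidth range follows from simple arithmetic: bus width times per‑pin data rate, divided by 8 bits per byte. The minimal sketch below assumes a 32‑bit interface per package and per‑pin rates of 16 Gbps (GDDR6 case) and 32 Gbps (GDDR7 case). Those rates are inferred from the reported range rather than confirmed by Tesla or SK hynix, and the function names are purely illustrative.

```python
# Hypothetical sketch: peak memory bandwidth implied by the reported AI5 package layout.
# Assumptions (not confirmed by Tesla or SK hynix): 32-bit interface per GDDR device,
# 16 Gbps per pin for the GDDR6 case and 32 Gbps per pin for the GDDR7 case.

BITS_PER_DEVICE = 32  # GDDR6 and GDDR7 devices each expose a 32-bit interface


def bus_width_bits(num_devices: int, bits_per_device: int = BITS_PER_DEVICE) -> int:
    """Total memory interface width in bits."""
    return num_devices * bits_per_device


def peak_bandwidth_gbs(bus_bits: int, gbps_per_pin: float) -> float:
    """Peak bandwidth in GB/s: bus width times per-pin rate, divided by 8 bits per byte."""
    return bus_bits * gbps_per_pin / 8


if __name__ == "__main__":
    bus = bus_width_bits(12)  # 12 SK hynix packages -> 384-bit bus
    for label, rate in [("GDDR6 @ 16 Gbps (assumed)", 16.0),
                        ("GDDR7 @ 32 Gbps (assumed)", 32.0)]:
        print(f"{label}: {bus}-bit bus, {peak_bandwidth_gbs(bus, rate):.0f} GB/s")
```

Under these assumptions the script reports 768 GB/s and 1536 GB/s (1.536 TB/s), matching the two ends of the range. Any intermediate per‑pin rate would land between them.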

Tesla is pursuing a dual‑foundry strategy: both TSMC and Samsung will manufacture AI5, with each producing slightly different physical versions of the same design. Musk emphasized that Tesla’s AI software will run identically on both variants. (tomshardware.com)

Multiple sources indicate that small‑scale production of AI5 is expected in late 2026, with mass production ramping in mid‑2027. (notateslaapp.com)

The AI5 chip is designed to deliver a step‑function improvement in compute and memory over AI4; estimates of the gain range from 10× to 50×, depending on the metric. (opencall.news)

This milestone also reignites Tesla’s Dojo supercomputer ambitions: with AI5 nearing readiness, Tesla has restarted work on Dojo3, its next-generation training system. (techradar.com)

Tesla’s AI5 chip represents a major leap in its vertical integration strategy—designing custom silicon tailored for its AI workloads, from autonomous driving to robotics and AI infrastructure.